```mermaid
graph TD
    A[ST segment elevated?] -->|yes| B((High Risk))
    A -->|no| C[Chest pain main symptom?]
    C -->|no| D((Low Risk))
    C -->|yes| E[Other symptoms?]
    E -->|yes| F((High Risk))
    E -->|no| G((Low Risk))
```

Simplified Reasoning as Boundedly Rational

Workshop in Theoretical Philosophy, Graz

Thanks for your feedback!

Abstract

Goal: Spell out the normative consequences of approaching simplified reasoning from a tractability perspective.

Framework: Evaluate simplified reasoning with a simple yet powerful cost-benefit analysis: the consequentialist framework. Carefully justify [A] which reasoning policies are available to limited agents and [B] what the normative status of the framework's verdicts is.

Upshot: What bounded rationality demands of limited agents (the “ought” of bounded rationality) requires substantial effort from them without predicting widespread irrationality.

1. The Problem

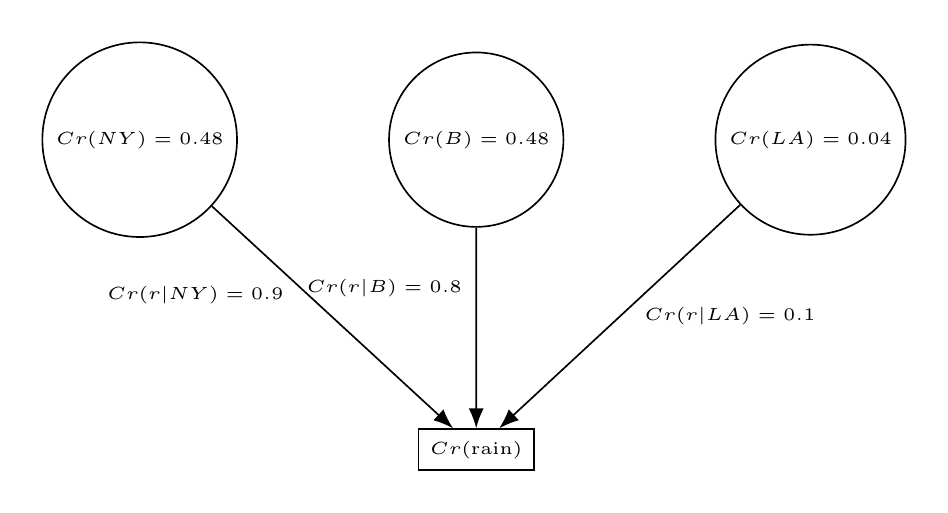

Classic example (Staffel 2019): forecasting rain during an event. Since the event's location is uncertain, the agent assigns evidence-based credences to the candidate locations. One simplifying strategy is to screen off the unlikely candidates, renormalize the credences over the remaining ones, and compute a weighted average of the rain likelihoods of only those most likely candidates.
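A minimal sketch of the two forecasts, assuming four hypothetical candidate locations with made-up credences and rain likelihoods (none of these numbers come from Staffel 2019), and assuming a hypothetical screening threshold of 0.1:

```python
# Hypothetical credences over candidate locations (they sum to 1).
credences = {"park": 0.55, "stadium": 0.35, "harbor": 0.07, "rooftop": 0.03}
# Hypothetical likelihood of rain at each location.
rain_likelihood = {"park": 0.6, "stadium": 0.2, "harbor": 0.9, "rooftop": 0.5}

def full_forecast(credences, rain_likelihood):
    """Weighted average of rain likelihoods over all candidate locations."""
    return sum(credences[loc] * rain_likelihood[loc] for loc in credences)

def simplified_forecast(credences, rain_likelihood, threshold=0.1):
    """Screen off candidates below `threshold`, renormalize, then take the weighted average."""
    kept = {loc: c for loc, c in credences.items() if c >= threshold}
    total = sum(kept.values())
    return sum((c / total) * rain_likelihood[loc] for loc, c in kept.items())

print(round(full_forecast(credences, rain_likelihood), 3))        # 0.478
print(round(simplified_forecast(credences, rain_likelihood), 3))  # 0.444
```

The simplified forecast uses two of the four locations and lands close to, but not exactly on, the full forecast; that gap is the kind of cost the framework below weighs against the savings.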

Image 1 above represents the example.

How should we characterize simplified reasoning in order to develop normative models of it? The aim is to do so in a very general way.

I will assume the following characterization of simplified reasoning:1

Intuitively, tractability refers to whether a given reasoning method remains feasible as the amount of information grows.2

2 Tractability is formally defined in computer science and in artificial intelligence approaches to cognition. See Van Rooij et al. (2019).

If you impose certain constraints when choosing between two hobbies (say, coffee versus gardening), it is reasonably easy to select one: e.g., choose the cheaper hobby that a majority of your best friends also like.

If you instead try to weigh every parameter of each hobby (variety of flavors, potential long-term hedonic satisfaction, ethical considerations, health issues, probability of a match with friends, etc.), the decision space can grow so rapidly that even the best computers in the world could not optimize the problem within a person's lifetime.
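A rough sketch of the contrast, using hypothetical hobby data and friend names. The constrained rule needs one pass over the options; for the exhaustive approach, the sketch assumes (as a simplification) that each parameter is discretized into a fixed number of levels, so the number of joint profiles grows exponentially with the number of parameters.

```python
# Hypothetical data: monthly cost and which best friends like each hobby.
hobbies = {
    "coffee": {"cost": 40, "liked_by": {"Ana", "Ben", "Caro"}},
    "gardening": {"cost": 25, "liked_by": {"Ana"}},
}
best_friends = {"Ana", "Ben", "Caro"}

def constrained_choice(hobbies, friends):
    """Cheapest hobby liked by a majority of best friends (None if none qualifies)."""
    eligible = [h for h, info in hobbies.items()
                if len(info["liked_by"] & friends) > len(friends) / 2]
    return min(eligible, key=lambda h: hobbies[h]["cost"], default=None)

def exhaustive_space_size(n_parameters, levels_per_parameter):
    """Joint parameter profiles an exhaustive evaluation would have to consider."""
    return levels_per_parameter ** n_parameters

print(constrained_choice(hobbies, best_friends))  # coffee
print(exhaustive_space_size(5, 10))               # 100000
```

Every added parameter multiplies the exhaustive space by the number of levels, while the constrained rule's work stays proportional to the number of hobbies.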

Kinds of simplified reasoning (SR) that the characterization aims to include:

- Dimension Reduction: Simplifying by using fewer input variables in a given reasoning problem. This includes thresholds for screening off epistemic possibilities and criteria for excluding options.3

- Problem Substitution: Simplifying by targeting a simpler problem.4

- Attitude Switch: Simplifying by reasoning with different attitudes. Examples: reasoning with rounded credences instead of precise credences, with beliefs instead of credences, with acceptance instead of belief, etc.

3 For screening off epistemic possibilities, see Staffel (2019); Ross and Schroeder (2014); Clarke (2013). For excluding options, see Tversky (1969); Payne, Bettman, and Johnson (1993); Simon (1956). My term “assumption sets” covers both the epistemic possibilities and the options that agents use in their reasoning.
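The two attitude switches involving credences can be sketched as simple maps. This is illustrative only: the rounding grain of 0.1 is hypothetical, and modeling “beliefs instead of credences” with a Lockean threshold (believe p iff credence is at least some cutoff, here a hypothetical 0.9) is an assumption, not something the characterization above commits to.

```python
def round_credence(c, step=0.1):
    """Attitude switch (a): replace a precise credence with the nearest multiple of `step`."""
    # The outer round() strips floating-point residue such as 0.7000000000000001.
    return round(round(c / step) * step, 10)

def lockean_belief(c, threshold=0.9):
    """Attitude switch (b), ASSUMING a Lockean threshold: believe p iff credence >= threshold."""
    return c >= threshold

print(round_credence(0.437), lockean_belief(0.437))  # 0.4 False
print(round_credence(0.68), lockean_belief(0.93))    # 0.7 True
```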

Some remarks to narrow my target

- I call dispositions to reason in certain ways policies (written \(\pi\)).5

- Policies are the choice variables in the framework. When \(S\) reasons by adopting \(\pi_1\) instead of \(\pi_2\), the framework assumes that \(S\) could, at least in principle, have chosen between them.6

- The outputs of simplified reasoning can be doxastic (beliefs, credences) or conative (intentions, plans).

- The norms governing the cognitive process of simplifying one's reasoning differ from the norms governing its outputs. In this project, I address the rationality of the process.7

5 I treat policies as cognitive-behavioral dispositions to reason in a certain way. Roughly: cognitive functions that take a set of stimulus conditions (mental states, perceptual inputs, etc.) and map them to the adoption of a certain way of reasoning.

6 “…to reason with restricted assumption sets (\(\pi_1\)) instead of full-information sets (\(\pi_2\))…”

7 Some epistemologists think that knowledge or truth are norms of belief. On my view, this doesn’t necessarily commit them to taking those epistemic goods as normative standards for the cognitive dispositions that make reasoning tractable.
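Footnote 5's notion of a policy as a function from stimulus conditions to a way of reasoning can be sketched as a typed function. The condition fields (`time_pressure`, `n_candidates`), the cutoff of 5 candidates, and the policy itself are hypothetical; the two output labels echo the \(\pi_1\)/\(\pi_2\) contrast in footnote 6.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Conditions:
    """Hypothetical stimulus conditions a policy might respond to."""
    time_pressure: bool
    n_candidates: int

# A policy maps stimulus conditions to the adoption of a way of reasoning (here, a label).
Policy = Callable[[Conditions], str]

def restrict_when_pressed(cond: Conditions) -> str:
    """Hypothetical policy: use a restricted assumption set under time pressure
    or when there are many candidates; otherwise use the full-information set."""
    if cond.time_pressure or cond.n_candidates > 5:
        return "restricted assumption set"
    return "full-information set"

policy: Policy = restrict_when_pressed
print(policy(Conditions(time_pressure=True, n_candidates=3)))   # restricted assumption set
print(policy(Conditions(time_pressure=False, n_candidates=3)))  # full-information set
```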

My target topic today is the rationality of SR:

Question: What is rationally required from an agent disposed to simplify her reasoning?

2. The Framework

What is a good framework for developing normative models of SR?

The consequentialist framework gives a way to approach different cases of simplified reasoning.

On this framework, each reasoning policy is assessed by a cost-benefit analysis that assigns it a net value.

The reasoning policy that maximizes this value is minimally interpreted as the one that guides a rational assessment of the agent in a given situation.8

8 Formally, a reasoning policy in a set of reasoning policies tractable for subject \(S\), \(\pi_i \in \Pi_S\), is evaluated according to its net value \(V(\pi_i)= U(\pi_i) - C(\pi_i)\), which trades off the utilities and costs of using that policy. The policy that the agent ought to implement is the one that maximizes the net value: \[\pi^{\star} = \arg\max_{\pi_i \in \Pi_S} V(\pi_i)\] This is a simple yet powerful approach advocated in the resource-rationality tradition (Chater and Oaksford 1999; Icard 2023; Lieder and Griffiths 2020; Griffiths, Lieder, and Goodman 2015). See the discussion in Rich et al. (2020).
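The rule in footnote 8 can be sketched as follows. This is a minimal illustration, assuming each policy's \(U\) and \(C\) are already given as numbers and that membership in \(\Pi_S\) is given as a flag; the policy names and values are hypothetical. It also shows why tractability matters as a constraint: without the filter, an intractable policy would win the maximization.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PolicyEval:
    name: str
    utility: float   # U(pi_i)
    cost: float      # C(pi_i)
    tractable: bool  # whether pi_i belongs to Pi_S for this agent

def net_value(p: PolicyEval) -> float:
    """V(pi_i) = U(pi_i) - C(pi_i)."""
    return p.utility - p.cost

def best_policy(policies):
    """pi* = argmax of V over Pi_S; intractable policies are excluded first."""
    pi_s = [p for p in policies if p.tractable]
    if not pi_s:
        raise ValueError("Pi_S is empty")
    return max(pi_s, key=net_value)

# Hypothetical candidates.
candidates = [
    PolicyEval("full-information set", utility=10.0, cost=2.0, tractable=False),
    PolicyEval("restricted assumption set", utility=7.0, cost=1.5, tractable=True),
    PolicyEval("rounded credences", utility=6.0, cost=1.0, tractable=True),
]
print(best_policy(candidates).name)         # restricted assumption set
print(max(candidates, key=net_value).name)  # full-information set (tractability ignored)
```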

[A] Which reasoning policies are available to limited agents? Answer: Pay close attention to tractability, the function of simplified reasoning! (§3)

If we ignore tractability as a constraint, we risk including intractable policies in the set of choice variables.

[B] What is the normative status of the verdicts? Answer: Prima facie, what an agent ought to do in a reasoning situation is interpreted as a guide for rationally assessing the agent. (§4)

Primarily, this interpretation is theoretically fruitful for investigating rationality, but it can also give the agent advice and guide her improvement. There is some flexibility in interpreting the kind of “ought” at issue; more on this in §4.

In what follows, I give more careful answers to each question.

3. The Function of Simplified Reasoning

Why is tractability so central for interpreting a normative model?

Given my notion of policy, a good strategy for arguing that tractability is central is to show a general connection between tractable reasoning policies and what bounded rationality requires.

Argument.

[P1] Limited agents can simplify their reasoning by making resourceful use of their cognitive limitations.

[P1.1] Limited agents can reason in demanding situations by solving problems that seem difficult at first glance.9

[P1.2] Limited reasoners cannot reason as if they were computationally unlimited.10

9 E.g., finding a route, allocating personal budgets, and playing games, all of which are associated with tasks that are computationally intractable in general. See MacGregor and Ormerod (1996) for evidence of good human performance on the traveling salesperson problem (TSP).

10 The alternative (Cherniak 1992, 78) would be to posit an “immediate synthetic a priori intuition” or a “transcendental ego, outside space, time, causality, and so on”. But such capacities do not seem to count as explanatory.
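Footnote 9's TSP point can be illustrated with a small sketch. Exact search must compare \((n-1)!/2\) distinct tours, whereas a greedy nearest-neighbour heuristic needs only on the order of \(n^2\) distance lookups. The four city coordinates are hypothetical, and nearest-neighbour is chosen here only as a familiar tractable heuristic; it is not offered as a model of the strategies people use in MacGregor and Ormerod's experiments.

```python
import math
from itertools import permutations

def tour_length(tour, dist):
    """Length of a closed tour visiting cities in order and returning to the start."""
    return sum(dist[tour[i]][tour[(i + 1) % len(tour)]] for i in range(len(tour)))

def n_distinct_tours(n):
    """Distinct undirected closed tours through n >= 3 cities: (n - 1)! / 2."""
    return math.factorial(n - 1) // 2

def brute_force(dist):
    """Exact optimum by enumerating every tour that starts at city 0."""
    n = len(dist)
    rest = min(permutations(range(1, n)), key=lambda r: tour_length((0,) + r, dist))
    return (0,) + rest

def nearest_neighbour(dist, start=0):
    """Greedy heuristic: always move to the closest unvisited city."""
    tour, unvisited = [start], set(range(len(dist))) - {start}
    while unvisited:
        here = tour[-1]
        nxt = min(unvisited, key=lambda c: dist[here][c])
        tour.append(nxt)
        unvisited.remove(nxt)
    return tuple(tour)

# Hypothetical cities at the corners of a unit square.
cities = [(0, 0), (1, 0), (1, 1), (0, 1)]
dist = [[math.dist(a, b) for b in cities] for a in cities]

print(tour_length(brute_force(dist), dist))        # 4.0
print(tour_length(nearest_neighbour(dist), dist))  # 4.0
print(n_distinct_tours(20))                        # 60822550204416000
```

With four cities both methods find the optimal tour; with twenty, exact search already faces about \(6 \times 10^{16}\) tours, while the greedy heuristic still finishes in a few hundred steps (possibly with a longer tour).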

[P2] If limited agents were not rational under their bounds, they could not exhibit the reasoning capacities we observe in common situations.

[C] Therefore, limited agents are rational under their bounds.

In short, if we do not restrict the set of policies available for reasoning to the tractable ones, we lack a plausible explanation of the dispositions to adopt reasoning-simplifying policies.

Result: What limited agents ought to do = adopt the policy \(\pi_i \in \Pi_S\) that best strikes the balance between its utility and costs = What bounded rationality requires.

Why is this argument compelling? → It helps to distinguish between:

In some situations, one would prefer to have better options: no matter what one chooses, one ends up with grounds for complaint.

To theorize fruitfully about bounded rationality, we need to keep apart the fact that every reasoning policy comes with costs and the fact that, sometimes, not all available policies result in negative net values.

Fact 1:

Tractable policies impose costs

Fact 2:

Some sets of tractable policies can lead to bad predicaments. Sometimes: irrational.
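One way to model the contrast between a bad predicament and irrationality is sketched below. The reading assumed here (a predicament: even the best tractable policy has negative net value; irrationality: adopting a policy other than the best available one) is a simplification for illustration, and the (utility, cost) pairs are hypothetical.

```python
def net_values(options):
    """Map each policy name to its net value V = U - C."""
    return {name: u - c for name, (u, c) in options.items()}

def assess(chosen, options):
    """Separate a bad predicament (all options negative) from a failure to pick the best."""
    values = net_values(options)
    best = max(values.values())
    return {
        "bad_predicament": best < 0,         # even the best available policy has V < 0
        "rational": values[chosen] == best,  # the chosen policy maximizes V
    }

# Hypothetical (utility, cost) pairs for three tractable policies.
options = {"guess": (1.0, 3.0), "quick check": (2.0, 2.5), "full search": (4.0, 6.0)}
print(assess("quick check", options))  # {'bad_predicament': True, 'rational': True}
print(assess("full search", options))  # {'bad_predicament': True, 'rational': False}
```

On this reading, an agent can be stuck in a predicament where every option costs more than it yields and still be rational, as long as she picks the least bad policy.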

4. Normative Robustness

Why are verdicts of a consequentialist treatment normatively robust?

Because the consequentialist framework does double work: it answers two questions.11

11 For a recent, similar view see Thorstad (2024).

- How should limited agents reason?

- Answer: The consequentialist framework: Among a set of tractable policies for reasoning, limited agents should select the best policy (i.e. the policy that provides the best net trade-off between costs and benefits).12

- What is rationally required from limited agents?

- Answer: The consequentialist framework again!

This is not wordplay. Identifying what limited agents ought to do in reasoning with what they are rationally required to do is a key insight behind this strategy.

Familiar from Lord (2017): what you ought to do and what you are rationally required to do are the same thing. Motivation: otherwise, it would be very difficult to answer the question “Why be rational?” (Kolodny 2005).

13 In the terms of Carr (2022), the “ought” needs to be normatively robust (i.e., non-conventional and not seriously context-sensitive).

Interpretive constraint for \(\pi^{\star}\): it should capture genuine standards for normative assessment.13

How can the “ought” of bounded rationality capture genuine norms? → Look at non-conventional standards of success. Example: predictive accuracy.

Example: Fast-and-frugal trees.

Frugal = uses fewer cues to make a categorization than, for instance, logistic regression.

Fast = requires fewer (logical or arithmetical) operations than alternatives.

See Martignon, Katsikopoulos, and Woike (2008).
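The decision tree shown at the start of this handout can be written as a fast-and-frugal tree in a few lines. This is a sketch assuming each cue is a simple yes/no attribute of the case; the field names are hypothetical renderings of the tree's node labels. `cues_used` makes frugality visible: the tree stops at the first cue that has an exit, whereas a regression model would combine all three cues on every case.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Patient:
    st_segment_elevated: bool
    chest_pain_main_symptom: bool
    other_symptoms: bool

def fft_classify(p: Patient) -> str:
    """Check one cue at a time; every cue except the last has a single exit."""
    if p.st_segment_elevated:
        return "high risk"
    if not p.chest_pain_main_symptom:
        return "low risk"
    return "high risk" if p.other_symptoms else "low risk"

def cues_used(p: Patient) -> int:
    """How many cues the tree inspects before reaching an exit."""
    if p.st_segment_elevated:
        return 1
    if not p.chest_pain_main_symptom:
        return 2
    return 3

case = Patient(st_segment_elevated=False, chest_pain_main_symptom=True, other_symptoms=True)
print(fft_classify(case), cues_used(case))  # high risk 3
```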

While complex models can be used effectively to fit a dataset, fast-and-frugal heuristics are robust in predicting new cases.

The best reasoning policies are not just a convenient way (among alternatives) to coordinate actions; they are adapted to the predictability of cues. We use them as assessment principles.

This is done by comparing how well a heuristic fits a known set of cases with how many new cases it correctly predicts. Each heuristic is compared with alternatives to determine which performs better in fitting and in prediction. Fitting the known cases too closely indicates that the model is fitting noise.
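The fit-versus-prediction comparison can be sketched on toy data. Everything here is hypothetical: six training cases (one with a noisy label) and four new cases, each described by three binary cues. A frugal one-cue rule is compared with a lookup table that memorizes every training pattern, used here as a simple stand-in for an overly complex model (it is not logistic regression).

```python
# Hypothetical cases: (cue_a, cue_b, cue_c) -> label. The case (0, 1, 0) -> 1 is noise.
train = [((1, 0, 0), 1), ((1, 1, 0), 1), ((0, 1, 1), 0),
         ((0, 0, 1), 0), ((0, 1, 0), 1), ((1, 0, 1), 1)]
test = [((1, 1, 1), 1), ((0, 1, 0), 0), ((0, 0, 0), 0), ((1, 0, 0), 1)]

def one_cue(x):
    """Frugal rule: predict the label from cue_a alone."""
    return x[0]

def make_lookup(cases, default=0):
    """Complex stand-in: memorize every training pattern; unseen patterns get `default`."""
    table = {x: y for x, y in cases}
    return lambda x: table.get(x, default)

def accuracy(model, cases):
    """Share of cases whose label the model reproduces."""
    return sum(model(x) == y for x, y in cases) / len(cases)

lookup = make_lookup(train)
for name, model in [("one_cue", one_cue), ("lookup", lookup)]:
    print(name, "fit:", round(accuracy(model, train), 2), "predict:", accuracy(model, test))
```

On these toy cases the lookup table fits all six training cases but predicts only two of the four new ones, because it also memorized the noisy label; the one-cue rule fits five of six and predicts all four.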

What matters in assessing a reasoning policy's reliability is not whether a particular adoption of the policy succeeds or fails in a given case, but whether it succeeds more often than it fails across its adoptions.14

The “ought” of bounded rationality captures features (like predictive accuracy) that matter in the kinds of situations for which certain reasoning policies are adapted.

What limited agents ought to do does provide a deontic ordering. It is just not global: it can be restricted to problem domains.
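A domain-restricted ordering can be sketched as a separate ranking per problem domain. The domain labels and net values below are purely hypothetical placeholders, chosen only to show that the best policy in one domain need not be best in another; they are not empirical claims about either method.

```python
# Hypothetical net values V(pi) for two policies in two problem domains.
values_by_domain = {
    "domain A": {"fast-and-frugal tree": 0.8, "logistic regression": 0.6},
    "domain B": {"fast-and-frugal tree": 0.4, "logistic regression": 0.9},
}

def ordering(domain):
    """Deontic ordering of policies restricted to one domain (best first)."""
    values = values_by_domain[domain]
    return sorted(values, key=values.get, reverse=True)

print(ordering("domain A"))  # ['fast-and-frugal tree', 'logistic regression']
print(ordering("domain B"))  # ['logistic regression', 'fast-and-frugal tree']
```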

In sum: simplified reasoning policies that are best in certain kinds of situations are often reliable. This reliability explains why they are normatively robust (why they capture genuine norms). It leaves room for different interpretations of a model's verdicts, but these interpretations are constrained by ecological considerations: the domains in which the policies normally work.

5. Wrap Up

Where does all of that leave us?

We have a general strategy for assessing agents who simplify their reasoning.

But we need to constrain how we set it up and how we interpret the verdicts.

There is a promising way of reconciling the “ought” of bounded rationality with the demanding things that even limited agents are (boundedly) rationally required to do.